Facebook has announced that it is stepping up its fight against child abuse. The company has outlined several new measures to better detect and remove content that exploits children, including updated warnings, improved automated alerts and new reporting tools.

The social media giant recently conducted a study of the child exploitative content shared on its platform to determine why such content is shared, with a view to improving its processes.

Its findings showed that many instances of such sharing were not malicious in intent. However, the content still causes real harm and poses a significant risk to the children involved. The company also found that more than 90% of the content was the same as or similar to previously reported content.

The first new alert is a pop-up that will be shown to users who search for terms associated with child sexual abuse content, urging them not to view these images and to get help.

This is designed to address incidents where users may not be aware that the content they're sharing is illegal and could pose a risk to the children involved.

The second alert type is more serious: it informs people who have shared child exploitative content about the harm it can cause, and warns them that if they share this type of content again, their account may be disabled.

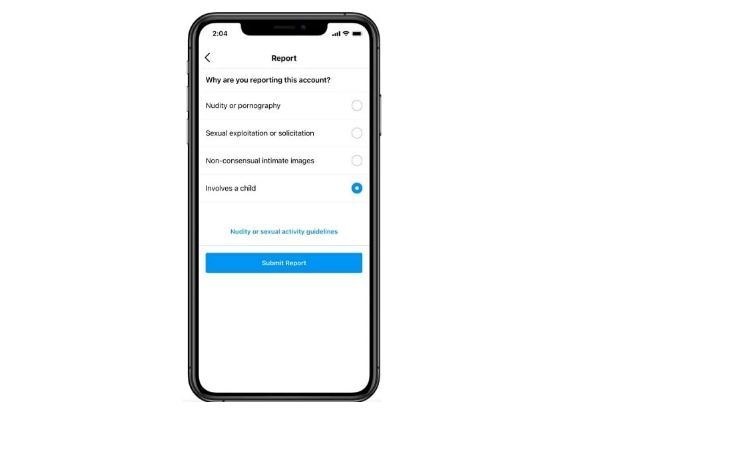

Facebook has also improved its user reporting flow for such violations, and these reports will now be prioritized for review.
